Azure Function setup & trigger

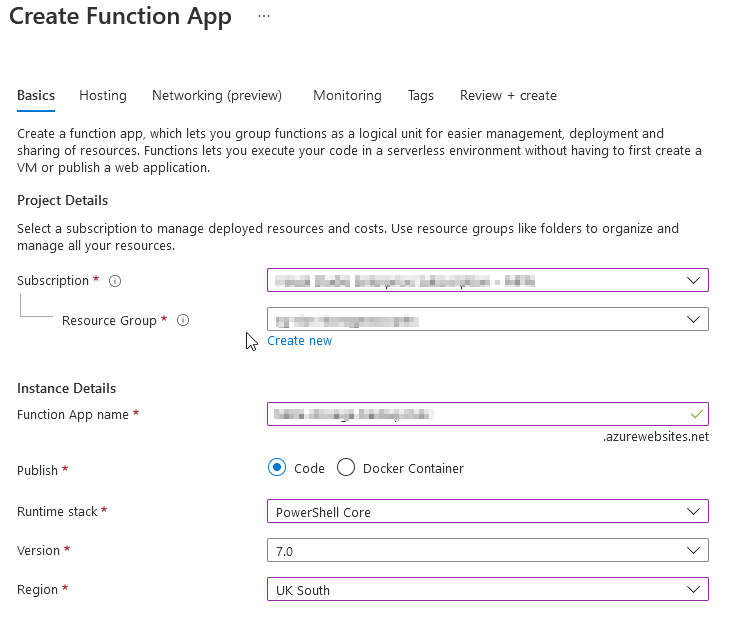

First, we’re going to create an Azure Function using the PowerShell runtime stack and the Code publish method. I’m using the Consumption plan type to reduce costs.

For simplicity, under ‘Hosting’ I selected the existing Storage Account that holds the container I want to copy from. However, you can create a new Storage Account instead to keep the Azure Function’s containers separate from your existing Storage Account.

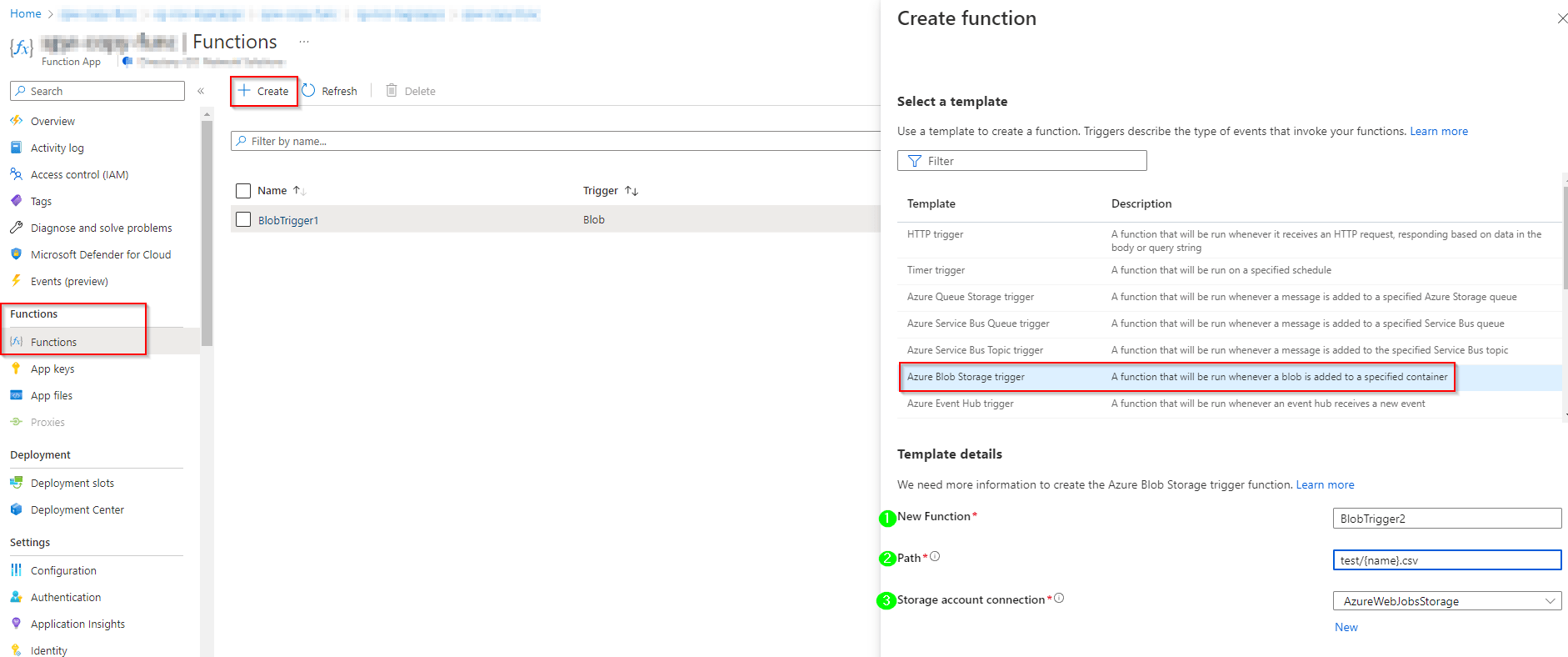

Once the deployment completes, we need to create a trigger so the function runs whenever a new blob is uploaded to the container.

Trigger setup and config

There’s a handy Azure Blob Storage trigger, which is perfect for this solution.

When selecting the blob storage trigger, we’re presented with some additional options for the trigger criteria:

Name: The name of the trigger (mine is BlobTrigger1).

Path: The path to the container/blob. In my example I’m using ‘test/{name}.csv’, so the function only triggers for files with a .csv extension uploaded to the container ‘test’.

More on blob name patterns can be found here: Azure Blob storage trigger for Azure Functions | Microsoft Learn

Storage account connection: My connection is ‘AzureWebJobsStorage’, as I’ve linked my Function to an existing Storage Account (as mentioned in the intro). If you want to use a different storage account, click ‘New’ and select the Storage Account you want as the trigger source. More on that here: Azure Blob storage trigger for Azure Functions | Microsoft Learn

Finally, create the trigger and wait for deployment to complete.
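Behind the scenes, these trigger settings are stored as a binding in the function’s function.json file. As a rough illustration, the PowerShell sketch below builds the equivalent JSON for my settings. Two assumptions to flag: the binding name InputBlob is what the portal template has used in my experience (it must match the parameter name in run.ps1), and New-BlobTriggerFunctionJson is a hypothetical helper, not part of any Azure module.

```powershell
# Hypothetical helper: builds a function.json-style document for a blob trigger
# with the settings used in this post. Assumption: 'InputBlob' is the binding
# name, and it must match the parameter name in run.ps1's param block.
function New-BlobTriggerFunctionJson {
    param(
        [string] $BindingName = 'InputBlob',
        [string] $Path = 'test/{name}.csv',           # container 'test', .csv blobs only
        [string] $Connection = 'AzureWebJobsStorage'  # app setting with the connection string
    )

    $binding = [ordered]@{
        name       = $BindingName
        type       = 'blobTrigger'
        direction  = 'in'
        path       = $Path
        connection = $Connection
    }

    # @() keeps a single binding serialized as a JSON array.
    $document = [ordered]@{ bindings = @($binding) }
    return ConvertTo-Json -InputObject $document -Depth 5
}

# Print the JSON for the settings described above.
New-BlobTriggerFunctionJson
```

Comparing this against the function.json shown in the portal is a quick way to confirm the path and connection were saved as intended.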

Uploading AzCopy

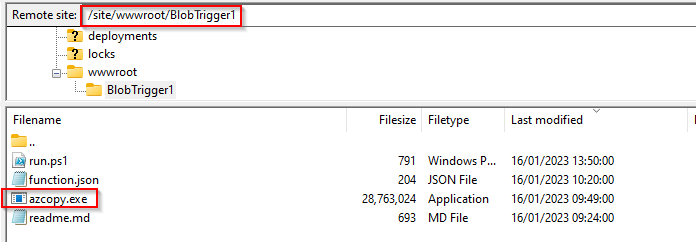

Next, we need to upload the AzCopy executable to the Function so it can be invoked when the function is triggered.

We will be using AzCopy v10. This can be downloaded from the Microsoft website here.

A quick recap on how to do this:

- Go to your Azure Function and locate Deployment on the left navigation pane

- Click Deployment Center and locate the FTPS credentials tab

- Copy the endpoint, username & password to connect to the Azure Function via an FTP client such as FileZilla

- Lastly, let’s drag & drop azcopy.exe from your machine to /site/wwwroot/BlobTrigger1 (or whatever your trigger is named)
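If you’d rather script the upload than use an FTP client, here’s a minimal sketch using .NET’s FtpWebRequest over explicit TLS. It assumes the FTPS endpoint from Deployment Center looks like ftps://<host>/site/wwwroot. Because FtpWebRequest doesn’t recognise the ftps:// scheme, the hypothetical ConvertTo-FtpUploadUri helper swaps it for ftp:// and TLS is switched on with EnableSsl instead. Both function names are made up for this example, and FtpWebRequest is a legacy .NET API, so treat this as a convenience rather than a recommended deployment route.

```powershell
# Hypothetical helper: turns the FTPS endpoint from Deployment Center into an
# ftp:// URI for a file under the function folder. FtpWebRequest does not
# recognise the ftps:// scheme, so TLS is enabled via EnableSsl instead.
function ConvertTo-FtpUploadUri {
    param(
        [Parameter(Mandatory)] [string] $FtpsEndpoint,   # e.g. ftps://<host>/site/wwwroot
        [Parameter(Mandatory)] [string] $RelativePath    # e.g. BlobTrigger1/azcopy.exe
    )
    $base = $FtpsEndpoint.TrimEnd('/') -replace '^ftps://', 'ftp://'
    return [Uri] ($base + '/' + $RelativePath.TrimStart('/'))
}

# Hypothetical helper: uploads a local file over explicit FTPS (FTP + TLS).
function Send-FileOverFtps {
    param(
        [Parameter(Mandatory)] [Uri] $Uri,
        [Parameter(Mandatory)] [string] $LocalPath,
        [Parameter(Mandatory)] [pscredential] $Credential
    )
    $request = [System.Net.FtpWebRequest]::Create($Uri)
    $request.Method = [System.Net.WebRequestMethods+Ftp]::UploadFile
    $request.Credentials = $Credential.GetNetworkCredential()
    $request.EnableSsl = $true    # explicit FTPS
    $request.UseBinary = $true    # azcopy.exe is a binary file

    $bytes = [System.IO.File]::ReadAllBytes($LocalPath)
    $request.ContentLength = $bytes.Length
    $stream = $request.GetRequestStream()
    try { $stream.Write($bytes, 0, $bytes.Length) } finally { $stream.Close() }

    $response = $request.GetResponse()
    try { return $response.StatusDescription } finally { $response.Close() }
}

# Example (not run here):
# $uri  = ConvertTo-FtpUploadUri -FtpsEndpoint 'ftps://<host>/site/wwwroot' -RelativePath 'BlobTrigger1/azcopy.exe'
# $cred = Get-Credential   # FTPS username and password from Deployment Center
# Send-FileOverFtps -Uri $uri -LocalPath '.\azcopy.exe' -Credential $cred
```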

AzCopy

Next comes the AzCopy copy command, which performs the required copy when the Function is triggered. AzCopy v10 natively supports copying blobs between storage accounts, which makes this quite simple to script.

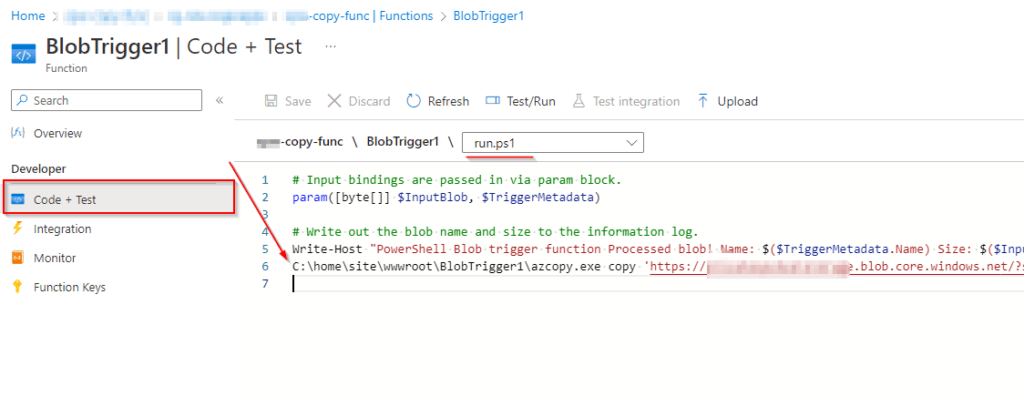

- In the Azure Function, go to Functions and select the blob storage trigger that has been created.

- Once in the trigger, go to Code + Test and use the dropdown to select the ‘run.ps1’ file; this is what executes when the function is triggered.

- Below the default bindings and log output, we can enter our AzCopy command.

My working example looks like this:

    C:\home\site\wwwroot\BlobTrigger1\azcopy.exe copy `
        'https://<source-storage-account-name>.blob.core.windows.net/<container-name>?<SAS-token>' `
        'https://<destination-storage-account-name>.blob.core.windows.net/<container-name>?<SAS-token>' `
        --recursive --include-pattern '*.csv'

In my example, I’m using two Blob SAS URLs, one generated on each Storage Account (here’s a guide on how to do this). For the allowed resource types I selected Container and left the rest at their defaults.
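A Blob SAS URL is simply the container URL with the SAS token appended as a query string after a ?. If you have the container name and token separately, a small helper like the hypothetical Join-ContainerSasUrl below assembles them; the account and container names are placeholders you’d replace with your own.

```powershell
# Hypothetical helper: appends a SAS token to a container URL as a query string.
# The token may be pasted with or without its leading '?'.
function Join-ContainerSasUrl {
    param(
        [Parameter(Mandatory)] [string] $AccountName,
        [Parameter(Mandatory)] [string] $ContainerName,
        [Parameter(Mandatory)] [string] $SasToken
    )
    $token = $SasToken.TrimStart('?')
    return 'https://{0}.blob.core.windows.net/{1}?{2}' -f $AccountName, $ContainerName, $token
}

# Example with placeholder values:
# Join-ContainerSasUrl -AccountName '<source-storage-account-name>' -ContainerName 'test' -SasToken '<SAS-token>'
```

When pasting a literal SAS URL straight into a PowerShell command, keep it in single quotes: the & characters in the token would otherwise be parsed by PowerShell rather than passed to AzCopy.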

In my AzCopy command, I’m using --recursive to copy across the same folder structure as the source. The --include-pattern *.csv option ensures only the CSV files within that structure are copied.
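Hard-coding SAS URLs in run.ps1 works, but one variation (not what my setup above does) is to keep them in application settings, which Azure Functions exposes to the script as environment variables. The sketch assumes two hypothetical settings, SOURCE_CONTAINER_SAS_URL and DEST_CONTAINER_SAS_URL, plus a hypothetical helper, Get-AzCopyCopyArgs, that builds the same arguments as the command above. Building an array and splatting it with @ also sidesteps PowerShell line-continuation characters.

```powershell
# Hypothetical helper: builds the AzCopy arguments used in this post as an array,
# so they can be splatted onto azcopy.exe without line-continuation characters.
function Get-AzCopyCopyArgs {
    param(
        [Parameter(Mandatory)] [string] $SourceSasUrl,
        [Parameter(Mandatory)] [string] $DestinationSasUrl,
        [string] $IncludePattern = '*.csv'
    )
    return @(
        'copy'
        $SourceSasUrl
        $DestinationSasUrl
        '--recursive'                         # keep the source folder structure
        '--include-pattern', $IncludePattern  # only copy matching file names
    )
}

# In run.ps1 (assumed app setting names; adjust to your own):
# $azcopy   = 'C:\home\site\wwwroot\BlobTrigger1\azcopy.exe'
# $copyArgs = Get-AzCopyCopyArgs -SourceSasUrl $env:SOURCE_CONTAINER_SAS_URL -DestinationSasUrl $env:DEST_CONTAINER_SAS_URL
# & $azcopy @copyArgs
```

Keeping the tokens out of run.ps1 also means rotating an expired SAS only requires updating an app setting rather than editing code.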

You can use many other pattern combinations to match different requirements, such as specific file names and more. More on this here: Copy blobs between Azure storage accounts with AzCopy v10 | Microsoft Learn
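AzCopy’s --include-pattern matches against file names only (not the folder part of the path), supports * wildcards, and accepts several patterns separated by a semicolon, such as '*.csv;*.txt'. To sanity-check a pattern before running a copy, the hypothetical Test-IncludePattern helper below approximates that behaviour with PowerShell’s -like operator. It is only an approximation: -like is case-insensitive and also treats ? and [ ] as wildcards, so AzCopy itself remains the final word.

```powershell
# Hypothetical helper: approximates whether AzCopy's --include-pattern would
# select a blob, by testing only the file-name part against each ';'-separated pattern.
function Test-IncludePattern {
    param(
        [Parameter(Mandatory)] [string] $BlobPath,   # e.g. 'reports/2024/sales.csv'
        [Parameter(Mandatory)] [string] $Pattern     # e.g. '*.csv;*.txt'
    )
    $fileName = ($BlobPath -split '/')[-1]
    foreach ($p in ($Pattern -split ';')) {
        # Skip empty entries, e.g. from a trailing ';'.
        if ($p -and $fileName -like $p) { return $true }
    }
    return $false
}
```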

Note: From my testing, a shared access signature (SAS) appears to be the only authentication method that works for this AzCopy setup; I couldn’t get a managed identity to work. I found no way to authenticate AzCopy within the Function the way you can with the Az PowerShell modules. Let me know if you know a way around this, as I was unsuccessful!

More info on how to create a shared access signature for a storage account: Create a service SAS for a container or blob – Azure Storage | Microsoft Learn

Testing

Let’s test the functionality.

I’m matching only .csv files in both the trigger and the AzCopy copy command.

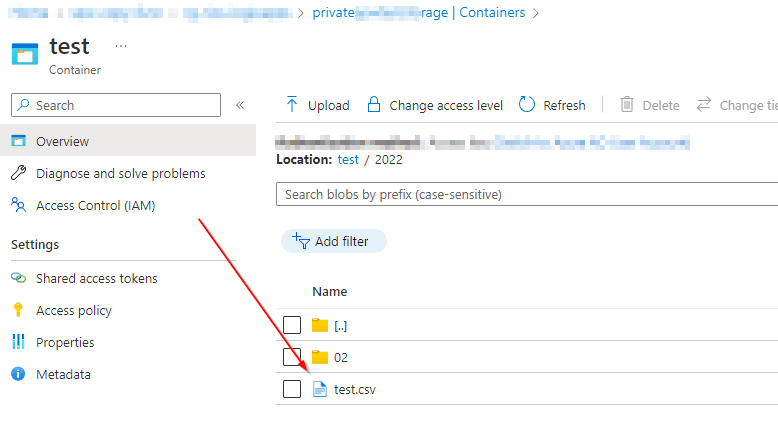

- Upload a test.csv to Storage Account 1 (source).

- The function will trigger.

- The function runs run.ps1, and its AzCopy command performs the copy.

Upload test.csv to Storage Account 1 via the Azure Portal:

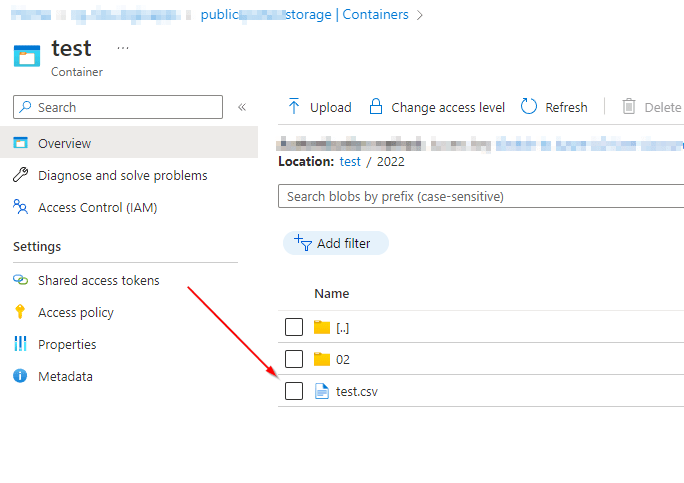

After a few minutes, the overview page shows that the function has executed (under Function Execution Count). In the destination Storage Account’s container, I can confirm the copy was successful, as test.csv now appears.

Checking the logs

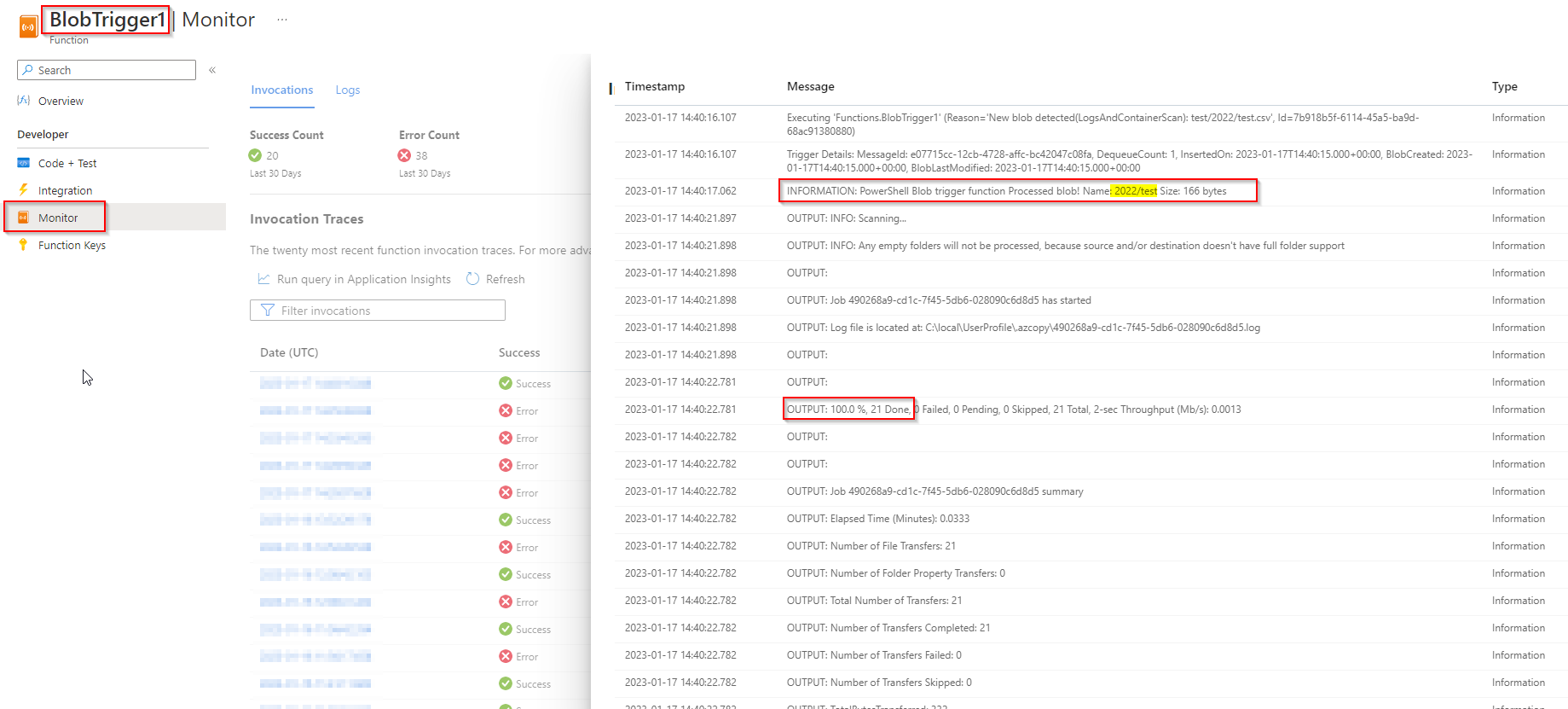

In the Function, select BlobTrigger1 (or whatever you named your trigger) and locate the Monitor section under Developer.

Here we can review the execution logs and any error output. In my example, you can see the event trigger output followed by the successful copy output.
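One thing worth knowing when reading these logs: PowerShell doesn’t treat a non-zero exit code from a native program as an error, so a failed AzCopy run can still be recorded as a successful execution. A hedged addition to run.ps1 is to check $LASTEXITCODE straight after the AzCopy call and throw on a non-zero value, so the failure shows up in Monitor. The helper name Assert-AzCopySucceeded is hypothetical, and the sketch assumes AzCopy returns a non-zero exit code when a job fails.

```powershell
# Hypothetical helper: turns a non-zero AzCopy exit code into a terminating error,
# so the Functions host records the execution as failed.
function Assert-AzCopySucceeded {
    param([int] $ExitCode)
    if ($ExitCode -ne 0) {
        throw "AzCopy failed with exit code $ExitCode"
    }
    Write-Host 'AzCopy completed successfully.'
}

# In run.ps1, straight after the AzCopy command:
# Assert-AzCopySucceeded -ExitCode $LASTEXITCODE
```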

Got a project that needs expert IT support?

From Linux and Microsoft Server to VMware, networking, and more, our team at CR Tech is here to help.

Get personalized support today to keep your systems running at peak performance and make sure your project is a success!
